May 6

Cloud CMS lets you generate preview images (often called thumbnails) for any content item stored in your repository. This generation can be performed ahead of time via content modeling or it can be done in real-time using a simple URL call.

Content Nodes

In Cloud CMS, every content item you create is referred to as a node. A node is a JSON document that can have any structure you’d like.

That is to say, you can drop any valid JSON document you’d like into Cloud CMS and the product will automatically capture it, version it, index it and so forth. It’ll also give your node a unique ID (provided by the _doc field).

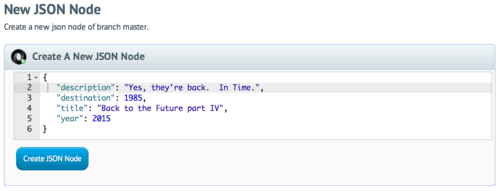

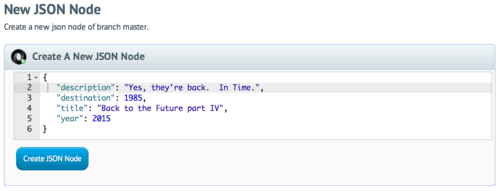

Every node lives on a repository branch. You might be working on repository repo1 and branch master. You might use the Cloud CMS Console to create a simple JSON node:

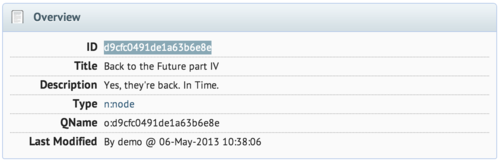

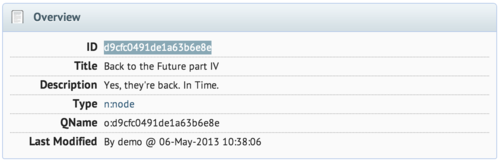

When we create this node, Cloud CMS automatically gives us a Node ID.

In this case, it gives us d9cfc0491de1a63b6e8e. It is available in our JSON document and we can use this ID to reference our node anytime we want to.

Note: Fans of the 80’s will no doubt identify the absurdity of a document promoting a movie that should not be made. Still, we’re here to have some fun, so let’s go with it!

REST API

The Cloud CMS REST API lets us work with Cloud CMS content via HTTP commands. If we’re authenticated against our Cloud CMS platform or if our platform offers guest-mode access, we can reference our node like this:
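A minimal sketch of how such a node URL can be put together. The host `api.cloudcms.com` and the path layout `/repositories/{repo}/branches/{branch}/nodes/{id}` are assumptions made for illustration, so adjust them to match your own platform:

```python
from urllib.parse import quote

# Assumption: illustrative host, not taken from this article.
BASE_URL = "https://api.cloudcms.com"


def node_url(repository: str, branch: str, node_id: str) -> str:
    """Build the URL of a node that lives on a repository branch."""
    parts = ["repositories", repository, "branches", branch, "nodes", node_id]
    # Escape each path segment so unusual IDs cannot break the URL.
    return BASE_URL + "/" + "/".join(quote(part, safe="") for part in parts)


url = node_url("repo1", "master", "d9cfc0491de1a63b6e8e")
print(url)
```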

This will give us back our JSON document. It’ll also give us a few additional and interesting properties.

    {
        "_doc": "d9cfc0491de1a63b6e8e",
        "_features": {
            "f:audit": {},
            "f:titled": {},
            "f:filename": {
                "filename": "Back to the Future part IV"
            },
            "f:geolocation": {},
            "f:indexable": {}
        },
        "_qname": "o:d9cfc0491de1a63b6e8e",
        "_type": "n:node",
        "description": "Yes, they're back. In Time.",
        "destination": 1985,
        "title": "Back to the Future part IV",
        "year": 2015
    }

We won’t delve into this too deeply. But it suffices to say that our new node has automatically been given a dictionary type n:node and a definition qname o:d9cfc0491de1a63b6e8e. This allows us to use Cloud CMS’ content modeling facilities (if we choose to) to provide validation logic and behaviors on top of our content.

We also see that it has been outfitted with a number of features such as f:audit, f:geolocation and f:indexable. These features inform the behavior of our content and tell Cloud CMS that:

- access to this content object should be recorded in the audit logs for our platform

- a geolocation index should be maintained for this content so we can look it up by latitude and longitude (if desired)

- this content should be indexed for search

Each feature can be configured. By default, no configuration is provided, so Cloud CMS falls back to its default way of handling each feature.

Attachments

Now on to preview images.

Every node in Cloud CMS supports zero or more attachments. An attachment is a binary object that is associated with the node.

You can think of attachments much like attachments on an email. An email can have many attachments, and the same is true for nodes. A node might have zero attachments. If so, we think of this as “content-less” content, and there is nothing wrong with that. Other nodes might have a single attachment.

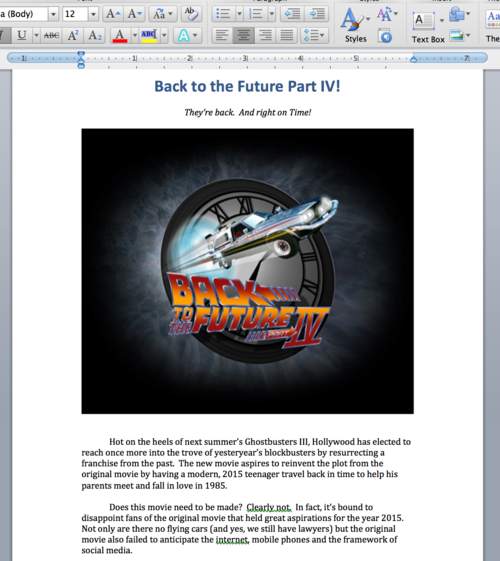

For example, a Word document would be stored in a node (with some JSON) and have a single default attachment which contains the Word document itself.

Suppose we upload a Word document as an attachment for our node. The Word document might provide a movie description along with some images.

We can then reference this Word document via HTTP:
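As a rough sketch, the attachment URL can be derived from the node URL by appending an attachment ID. The `/attachments/{id}` path segment and the host below are assumptions for illustration; `default` is the attachment name used throughout this article:

```python
from urllib.parse import quote

# Assumption: illustrative host and path layout.
BASE_URL = "https://api.cloudcms.com"


def attachment_url(repository: str, branch: str, node_id: str,
                   attachment_id: str = "default") -> str:
    """Build the URL that streams back one attachment of a node."""
    parts = ["repositories", repository, "branches", branch,
             "nodes", node_id, "attachments", attachment_id]
    return BASE_URL + "/" + "/".join(quote(part, safe="") for part in parts)


word_doc_url = attachment_url("repo1", "master", "d9cfc0491de1a63b6e8e")
print(word_doc_url)
```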

This will stream the Word document back to us. Very useful!

Previews

Now let’s suppose that we have a web site. And on our web site, we’d like to provide a link to this Word document along with a thumbnail for purposes of preview. It’s easy. We just let Cloud CMS do the thumbnail generation for us.
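The thumbnail request is just another URL on the node. Here is a minimal sketch, assuming a preview path of the form `/preview/{name}` under the node URL and an illustrative host; the name `thumb320` and the `size` parameter match the description that follows:

```python
from urllib.parse import quote, urlencode

# Assumption: illustrative node URL.
NODE_URL = ("https://api.cloudcms.com/repositories/repo1/branches/master"
            "/nodes/d9cfc0491de1a63b6e8e")


def preview_url(node_url: str, name: str, **params) -> str:
    """Build a preview URL; `name` is the attachment the preview is saved as."""
    url = f"{node_url}/preview/{quote(name, safe='')}"
    query = urlencode(params)
    return f"{url}?{query}" if query else url


thumbnail_url = preview_url(NODE_URL, "thumb320", size=320)
print(thumbnail_url)
```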

This will generate a thumbnail image of the default attachment with a max width of 320 in image/jpeg format. Cloud CMS will produce a preview image of the first page of the Word document.

The preview image will be saved as a new attachment on the node. The new attachment will be called thumb320. The thumbnail will only be generated on the first call. Subsequent calls will re-use the previously generated attachment.

Options

The URL-based preview generator provides all kinds of neat options. Using request parameters, you can adjust how your preview attachments are generated and maintained. Here are some of the options:

- mimetype - specifies the preview mimetype to be generated. If not specified, assumed to be image/jpeg. However, you could specify other image formats or even other document formats such as application/pdf.

- size - specifies the maximum width of the resulting image (when mimetype is an image format).

- attachment - specifies the attachment on the node to be previewed. If not specified, assumed to be default.

- force - specifies whether the preview attachment should be re-generated in the event it already exists. If not specified, assumed to be false.

- save - specifies whether the generated preview attachment should be saved as a new attachment on the node. If not specified, assumed to be true. If you set this to false, each request will generate the preview anew.

- fallback - specifies a fallback URL to redirect to (with an HTTP 301) if the preview does not exist or cannot be generated.
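The options above map naturally onto a query string. The sketch below is one way to assemble it. It leaves out anything you don't set so that the server-side defaults apply. Sending booleans as `true`/`false` is an assumption, and the helper name `preview_query` is hypothetical:

```python
from typing import Optional
from urllib.parse import urlencode


def preview_query(mimetype: Optional[str] = None,
                  size: Optional[int] = None,
                  attachment: Optional[str] = None,
                  force: Optional[bool] = None,
                  save: Optional[bool] = None,
                  fallback: Optional[str] = None) -> str:
    """Turn the preview options into a URL query string."""
    options = {"mimetype": mimetype, "size": size, "attachment": attachment,
               "force": force, "save": save, "fallback": fallback}
    params = {}
    for key, value in options.items():
        if value is None:
            continue  # not specified: let the server use its default
        if isinstance(value, bool):
            value = "true" if value else "false"  # assumed boolean encoding
        params[key] = value
    return urlencode(params)


print(preview_query(size=640, mimetype="image/png", force=True))
```

The resulting string is appended after a `?` to the preview URL of the node.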

Examples

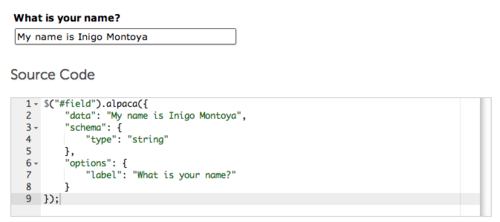

Cloud CMS uses preview generation in many places. Fundamentally, we use this quite extensively in all of our HTML5 front-end applications as a means for generating intuitive user interfaces. Here are a couple of really neat places where it shows up:

Real-time Document Transformations

Suppose we wanted to offer the default attachment (Word document) as a PDF. After all, PDF is a much preferred format for consumption. Businesses tend to prefer Word for the back-office and PDF for the consumer. We can do this by using a link like this:
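A sketch of what such a link could look like, assuming the same `/preview/{name}` path as for thumbnails. The preview name `pdf` and the host are hypothetical; `mimetype=application/pdf` is the option described earlier:

```python
from urllib.parse import urlencode

# Assumption: illustrative node URL.
NODE_URL = ("https://api.cloudcms.com/repositories/repo1/branches/master"
            "/nodes/d9cfc0491de1a63b6e8e")

# Ask for the default attachment (the Word document) rendered as a PDF.
query = urlencode({"attachment": "default", "mimetype": "application/pdf"})
pdf_url = f"{NODE_URL}/preview/pdf?{query}"
print(pdf_url)
```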

Multi-Device / Multiple Form Factors

Suppose we want to support lots of form factors. We might want to have a hi-res preview for large form factors (like a kiosk) and much smaller images for devices like an iPhone. We may even want different mimetypes based on bandwidth. We can adjust our URLs accordingly.

Here are a few examples:
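One way to organize this is a small table of device profiles that each produce their own preview URL. The profile names, sizes and preview names below are hypothetical, as are the host and the `/preview/{name}` path:

```python
from urllib.parse import urlencode

# Assumption: illustrative node URL.
NODE_URL = ("https://api.cloudcms.com/repositories/repo1/branches/master"
            "/nodes/d9cfc0491de1a63b6e8e")

# Hypothetical form factors: bigger, lossless images for a kiosk,
# small JPEGs for phones on limited bandwidth.
PROFILES = {
    "kiosk": {"size": 1024, "mimetype": "image/png"},
    "tablet": {"size": 640, "mimetype": "image/jpeg"},
    "phone": {"size": 160, "mimetype": "image/jpeg"},
}


def preview_url_for(device: str) -> str:
    """Build the preview URL for one device profile."""
    options = PROFILES[device]
    # A distinct preview name per profile keeps the saved attachments apart.
    name = f"{device}{options['size']}"
    return f"{NODE_URL}/preview/{name}?{urlencode(options)}"


for device in PROFILES:
    print(device, preview_url_for(device))
```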

Fallback

Sometimes we may not know whether a preview can be generated for a piece of content. Suppose, for example, that we want to produce a list of nodes. For content-less nodes, we might provide a stock image to use as a placeholder. The preview generator lets you fall back to a URL of your choosing. You can do it like this:
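A minimal sketch of a preview URL with a fallback. The placeholder image URL is hypothetical, as are the host and the `/preview/{name}` path. Note that the fallback URL has to be URL-encoded, since it travels inside another URL:

```python
from urllib.parse import urlencode

# Assumption: illustrative node URL and placeholder image.
NODE_URL = ("https://api.cloudcms.com/repositories/repo1/branches/master"
            "/nodes/d9cfc0491de1a63b6e8e")
PLACEHOLDER = "https://www.example.com/images/placeholder.png"

# urlencode escapes the ":" and "/" characters of the fallback URL.
query = urlencode({"size": 320, "fallback": PLACEHOLDER})
fallback_preview_url = f"{NODE_URL}/preview/thumb320?{query}"
print(fallback_preview_url)
```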

Conclusion

As Cloud CMS grows and evolves, we’re pushing further into services and capabilities that support live applications. The platform’s document management and transformation services are powerful capabilities that stand ready to service your web and mobile apps!

Are you new to Cloud CMS? If so, consider giving us a try by signing up for a free trial today.