UI feature - Folder Navigation Tree

Jul 27

Cloud CMS now provides a left-hand Folder Tree in the Folders view. Although it is a relatively simple feature, the Folder Tree makes moving between folders much easier and offers a familiar way to view and navigate content.

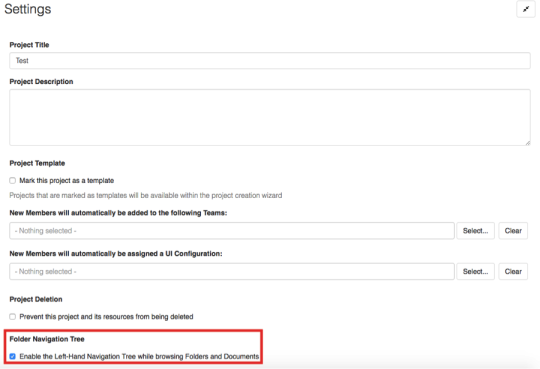

The left-hand Folder Tree can be enabled or disabled from the Project Settings page. It is disabled by default.

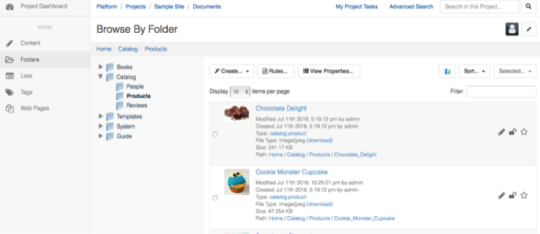

Once the left-hand Folder Tree is enabled, it appears under Browse By Folder. If a folder contains one or more subfolders, an arrow pointing towards the folder is shown next to it. Clicking the arrow turns it to point down and reveals that folder's subfolders. This hierarchical view makes it easier to see where you are and to jump between locations.
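For readers who like to think in code, the expand and collapse behavior described above can be modeled with a small tree structure. This is only an illustrative sketch, not how Cloud CMS implements the feature: the `FolderNode` class, the `render` function, the folder names, and the `>` and `v` markers standing in for the arrows are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FolderNode:
    """Hypothetical model of one folder in the tree (not a Cloud CMS type)."""
    name: str
    children: List["FolderNode"] = field(default_factory=list)
    expanded: bool = False

    def has_arrow(self) -> bool:
        # An arrow is shown only when the folder contains subfolders.
        return len(self.children) > 0

    def toggle(self) -> None:
        # Clicking the arrow flips between collapsed and expanded.
        if self.has_arrow():
            self.expanded = not self.expanded


def render(node: FolderNode, depth: int = 0) -> List[str]:
    """Return the visible lines of the tree, indented by nesting depth."""
    if node.has_arrow():
        marker = "v" if node.expanded else ">"
    else:
        marker = " "
    lines = ["  " * depth + f"{marker} {node.name}"]
    if node.expanded:
        # Subfolders are visible only while the parent is expanded.
        for child in node.children:
            lines.extend(render(child, depth + 1))
    return lines


# Example folders (made up for illustration).
root = FolderNode("Root", [
    FolderNode("Images", [FolderNode("Logos")]),
    FolderNode("Documents"),
])

root.toggle()  # simulate clicking the arrow next to "Root"
print("\n".join(render(root)))
```

In this sketch, only folders with subfolders get a marker, and toggling a folder changes which of its descendants are listed, which mirrors the arrow behavior in the Folder Tree.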

Any questions?

I hope this article encourages you to further explore the many features of Cloud CMS. If you have a question, cannot find a feature you are looking for, or have a suggestion, please Contact Us.